I’m trying to implement this method for rendering thick glass in Unity:

http://prideout.net/blog/?p=51

(Note that I do not want light absorption or Fresnel in my shader! I’m just trying to get the depth part working for now.)

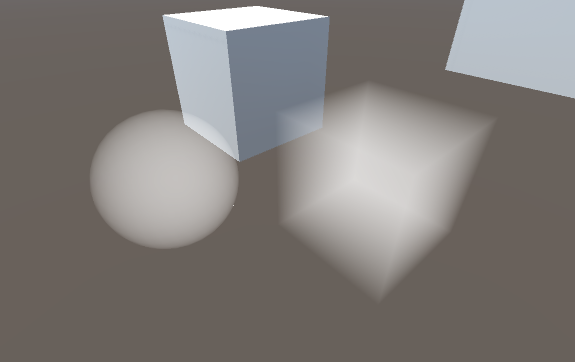

My idea was to add a secondary camera to the scene which is parented to the main camera and renders to a RenderTexture using a shader like the one described in the article. I would exclude the glass object layer from the main camera and include only the glass layer in the secondary camera. So the RenderTexture would look something like this:

Then I would just overlay the RenderTexture result on top of the main camera view with additive blending, and tint it if I like.
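For illustration, the overlay step could look roughly like the sketch below. It assumes the thickness RenderTexture is assigned to `_MainTex` and that the shader is used on a full-screen quad (or similar) drawn over the main camera view; the shader name and the `_Tint` property are hypothetical, not part of the article.

```hlsl
Shader "Hypothetical/ThicknessOverlay"
{
    Properties
    {
        // Assumed to be the RenderTexture written by the secondary camera.
        _MainTex ("Thickness RenderTexture", 2D) = "black" {}
        // Hypothetical tint applied to the glass.
        _Tint ("Glass tint", Color) = (1, 1, 1, 1)
    }
    SubShader
    {
        Pass
        {
            ZTest Always
            ZWrite Off
            Cull Off
            Blend One One   // additive: result = source + destination

            CGPROGRAM
            #pragma vertex vert_img
            #pragma fragment frag
            #include "UnityCG.cginc"

            sampler2D _MainTex;
            fixed4 _Tint;

            fixed4 frag(v2f_img i) : COLOR
            {
                // Thickness is stored as greyscale, so one channel is enough.
                fixed thickness = tex2D(_MainTex, i.uv).r;
                return thickness * _Tint;
            }
            ENDCG
        }
    }
    FallBack Off
}
```

Because the blend is additive, black areas of the RenderTexture leave the main camera image untouched, and thicker glass adds more of the tint colour.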

The issue I’m having is with writing the shader. I don’t really know what I’m doing when it comes to transforming my vertex positions with the matrices; this is what I’ve cobbled together from other people’s code. Here is the best I’ve got so far:


    Shader "Thickness"
    {
        Properties
        {
        }

        SubShader
        {
            Tags{ "RenderType" = "Opaque" }
            //LOD 200

            Pass
            {
                Lighting Off
                Fog{ Mode Off }

                CGPROGRAM
                #pragma vertex vert
                #pragma fragment frag
                #pragma fragmentoption ARB_precision_hint_fastest

                struct a2v
                {
                    float4 vertex : POSITION;
                    fixed4 color : COLOR;
                };

                struct v2f
                {
                    float4 pos : SV_POSITION;
                    half dist : TEXCOORD0;
                };

                v2f vert(a2v v)
                {
                    v2f o;
                    o.pos = mul(UNITY_MATRIX_MVP, v.vertex);
                    float4 temp = mul(UNITY_MATRIX_IT_MV, v.vertex);
                    o.dist = temp.z;
                    return o;
                }

                fixed4 frag(v2f i) : COLOR
                {
                    float depth = i.dist;
                    return half4(depth, depth, depth, 1);
                }
                ENDCG
            }

            Pass
            {
                //BlendOp Sub
                //Blend One One
                Lighting Off
                Fog{ Mode Off }
                Cull Front
                ZTest Greater /* This was needed to render the back faces on top of the front faces */

                CGPROGRAM
                #pragma vertex vert
                #pragma fragment frag
                #pragma fragmentoption ARB_precision_hint_fastest

                struct a2v
                {
                    float4 vertex : POSITION;
                    fixed4 color : COLOR;
                };

                struct v2f
                {
                    float4 pos : SV_POSITION;
                    half dist : TEXCOORD0;
                };

                v2f vert(a2v v)
                {
                    v2f o;
                    o.pos = mul(UNITY_MATRIX_MVP, v.vertex);
                    float4 temp = mul(UNITY_MATRIX_IT_MV, v.vertex);
                    o.dist = temp.z;
                    return o;
                }

                fixed4 frag(v2f i) : COLOR
                {
                    float depth = 1 - i.dist;
                    return half4(depth, depth, depth, 1);
                }
                ENDCG
            }
        }

        FallBack Off
    }

What I have here is my attempt at a two-pass shader. The first is a normal pass with back faces culled, where the pixels are colored by depth. In the second pass the front faces are culled and the depth is reversed. You can also see from the commented-out lines that I tried to change the blend mode so that the second pass’s dark pixels subtract from the lightness of the first pass’s pixels. I was unsuccessful in figuring out how to do this.

Basically I want the shader to render the front faces colored according to depth: the closer to the camera, the whiter the pixels should be. I also want the depth to be scaled according to the object’s boundaries, so that the furthest-back pixel is pure black and the closest is pure white, but I don’t know how to do this. In other words, I want the depth not to depend on how close the camera is.
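One way the bounds-based scaling might be sketched is shown below, assuming a script supplies the object's nearest and farthest view-space distances every frame. `_NearDist`, `_FarDist` and the shader name are hypothetical, not Unity built-ins. The sketch also reads the position through `UNITY_MATRIX_MV` rather than `UNITY_MATRIX_IT_MV`, since the inverse-transpose matrix is meant for transforming normals.

```hlsl
Shader "Hypothetical/NormalizedDepth"
{
    Properties
    {
        // Hypothetical: a script would have to set these per object,
        // e.g. from the distance range of the renderer's bounds.
        _NearDist ("Nearest view distance", Float) = 0
        _FarDist ("Farthest view distance", Float) = 1
    }
    SubShader
    {
        Tags { "RenderType" = "Opaque" }
        Pass
        {
            CGPROGRAM
            #pragma vertex vert
            #pragma fragment frag
            #include "UnityCG.cginc"

            float _NearDist;
            float _FarDist;

            struct v2f
            {
                float4 pos : SV_POSITION;
                float dist : TEXCOORD0;
            };

            v2f vert(appdata_base v)
            {
                v2f o;
                o.pos = mul(UNITY_MATRIX_MVP, v.vertex);
                // Unity's view space looks down -Z, so negate z to get a
                // positive distance in front of the camera.
                o.dist = -mul(UNITY_MATRIX_MV, v.vertex).z;
                return o;
            }

            fixed4 frag(v2f i) : COLOR
            {
                // Remap [_NearDist, _FarDist] to [0, 1], then flip so the
                // closest point is white and the farthest is black.
                float t = saturate((i.dist - _NearDist) / (_FarDist - _NearDist));
                float depth = 1 - t;
                return fixed4(depth, depth, depth, 1);
            }
            ENDCG
        }
    }
    FallBack Off
}
```

Since the range follows the object rather than the camera's clip planes, the gradient should look the same whether the camera is near or far, provided the two distances are kept up to date.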

The second pass should work the same way, but with front faces culled and the depth inverted, so that black is closer and white is further away. Then I want this second pass to blend with the first in such a way that white pixels don’t change the destination pixel color, while darker pixels darken the pixel colors of the first pass.
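A possible way to get a similar result is a reverse-subtract blend, so the target ends up holding back-face depth minus front-face depth, which is the thickness. The following is a rough sketch rather than the article's exact code: it assumes the secondary camera clears to black, it draws the back faces first and then subtracts the front faces, and the shader name is hypothetical.

```hlsl
Shader "Hypothetical/ThicknessBlend"
{
    // Shared by both passes: linear depth in [0, 1] over the camera range.
    CGINCLUDE
    #include "UnityCG.cginc"

    struct v2f
    {
        float4 pos : SV_POSITION;
        float depth : TEXCOORD0;
    };

    v2f vert(appdata_base v)
    {
        v2f o;
        o.pos = mul(UNITY_MATRIX_MVP, v.vertex);
        // View space looks down -Z; _ProjectionParams.w is 1 / far plane.
        o.depth = -mul(UNITY_MATRIX_MV, v.vertex).z * _ProjectionParams.w;
        return o;
    }

    fixed4 frag(v2f i) : COLOR
    {
        return fixed4(i.depth, i.depth, i.depth, 1);
    }
    ENDCG

    SubShader
    {
        Tags { "RenderType" = "Opaque" }

        // Pass 1: back faces only, added onto the black background.
        Pass
        {
            Cull Front
            ZTest Always
            ZWrite Off
            ColorMask RGB
            Blend One One

            CGPROGRAM
            #pragma vertex vert
            #pragma fragment frag
            ENDCG
        }

        // Pass 2: front faces only, subtracted from what pass 1 wrote.
        Pass
        {
            Cull Back
            ZTest Always
            ZWrite Off
            ColorMask RGB
            BlendOp RevSub   // result = destination - source
            Blend One One

            CGPROGRAM
            #pragma vertex vert
            #pragma fragment frag
            ENDCG
        }
    }
    FallBack Off
}
```

With an 8-bit target, the depth difference across a small object may span only a few grey levels, so a half or float RenderTexture format is probably needed for a smooth result.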

I’m trying to do exactly what the shader in the article I linked does. So can anyone help me figure out how to pull this together?

Thanks in advance!